"If you cannot measure it, you cannot improve it." - Lord Kelvin

The field of machine learning is progressing at breakneck speed, and new algorithms, techniques, and performance improvements are being published so frequently that it is impractical to keep pace. As expected, the research community, which in this field includes several corporate entities in addition to academic institutions, is open about its findings. The real hurdles in democratizing machine learning, however, are the engineering issues one is likely to face in moving ML from the lab to production.

The only open-source project making a serious attempt at solving the engineering issues of reliably scaling and deploying ML workloads is Kubeflow.

Kubeflow is dedicated to making deployments of machine learning workflows on Kubernetes simple, portable, and scalable.

However, until very recently, the Kubeflow project did not have any benchmarking components, making it impossible to evaluate the performance of the system when deployed on a given Kubernetes cluster. We at Cisco took the lead in working with the open-source community to come up with a solution. We realized that whenever performance is discussed, an enterprise needs clarity on at least the following issues:

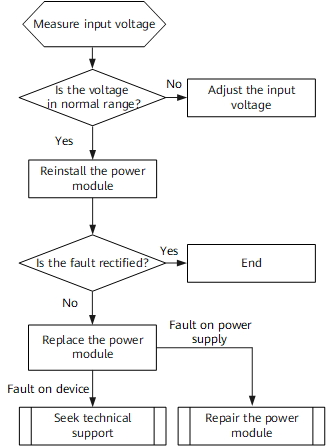

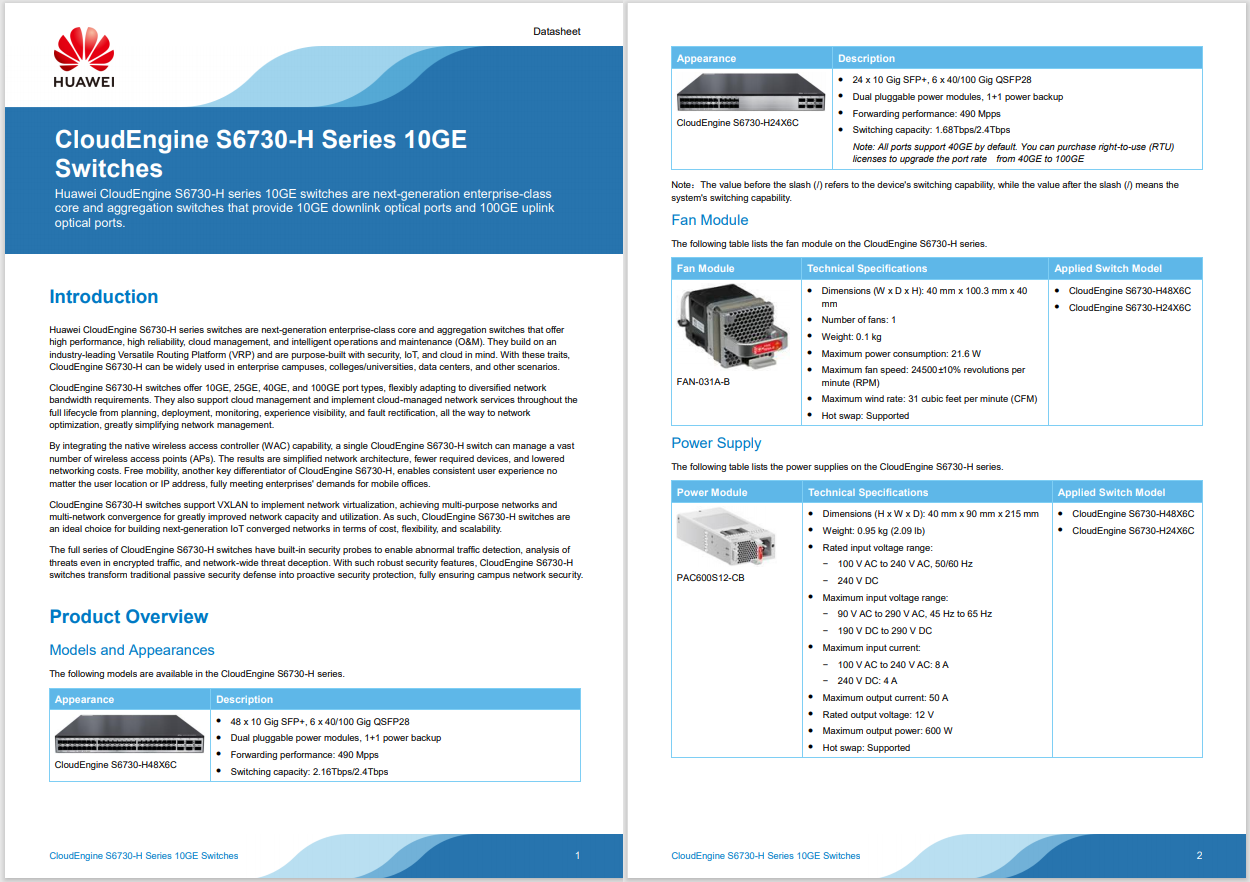

Based on the issues above, we came up with a set of requirements that an ML benchmarking platform should meet. These requirements are generic and do not depend on Kubeflow or any other specific system for running ML workloads. The picture below shows a schematic of the proposed benchmarking platform.

A benchmarking platform for ML workloads should satisfy at least the following requirements:

To satisfy these requirements, we designed Kubebench.

Kubebench is a harness for benchmarking and analyzing ML workloads on Kubernetes.

As shown in the figure, Kubebench runs on top of Kubeflow and is therefore not directly dependent on the underlying infrastructure. Because Kubeflow runs only on Kubernetes, Kubebench does as well. As a result, benchmarks can be run on any Kubernetes platform, including clusters that run workloads at massive scale.
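To make the idea of a benchmarking harness more concrete, here is a minimal Python sketch of two measurements such a harness typically reports. It is not Kubebench's API: the helpers `run_benchmark` and `scaling_efficiency` are hypothetical, a local Python function stands in for an ML job running on Kubernetes, and the throughput figures in the usage section are made up. `run_benchmark` repeats a workload after some warm-up runs and summarizes the wall-clock times, while `scaling_efficiency` compares throughput at several worker counts against a single-worker baseline, which is one common way to judge how well a workload scales.

```python
import statistics
import time
from typing import Callable, Dict, List


def run_benchmark(workload: Callable[[], None], warmup: int = 1, repeats: int = 5) -> Dict[str, float]:
    """Time `workload` several times and summarize wall-clock durations in seconds.

    Hypothetical helper for illustration; not part of Kubebench.
    """
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    # Warm-up runs absorb one-off costs (caches, lazy initialization) and are not recorded.
    for _ in range(warmup):
        workload()
    durations: List[float] = []
    for _ in range(repeats):
        start = time.perf_counter()
        workload()
        durations.append(time.perf_counter() - start)
    return {
        "runs": repeats,
        "mean_s": statistics.mean(durations),
        "median_s": statistics.median(durations),
        "min_s": min(durations),
        "max_s": max(durations),
        # Sample standard deviation needs at least two data points.
        "stdev_s": statistics.stdev(durations) if repeats > 1 else 0.0,
    }


def scaling_efficiency(throughput_by_workers: Dict[int, float]) -> Dict[int, float]:
    """Efficiency of each worker count relative to the single-worker baseline.

    efficiency(N) = throughput(N) / (N * throughput(1)); 1.0 means perfect linear scaling.
    Hypothetical helper for illustration; not part of Kubebench.
    """
    baseline = throughput_by_workers.get(1)
    if baseline is None or baseline <= 0:
        raise ValueError("a positive single-worker baseline throughput is required")
    efficiency: Dict[int, float] = {}
    for workers, throughput in sorted(throughput_by_workers.items()):
        if workers < 1:
            raise ValueError("worker counts must be positive")
        efficiency[workers] = throughput / (workers * baseline)
    return efficiency


if __name__ == "__main__":
    # A toy CPU-bound function standing in for a training step.
    def toy_workload() -> None:
        sum(i * i for i in range(200_000))

    for key, value in run_benchmark(toy_workload).items():
        print(f"{key}: {value:.4f}")

    # Made-up throughputs (samples/s) for 1, 2 and 4 workers, purely illustrative.
    print(scaling_efficiency({1: 100.0, 2: 190.0, 4: 360.0}))
```

With the illustrative throughputs above, the last line prints `{1: 1.0, 2: 0.95, 4: 0.9}`, meaning four workers deliver 90% of ideal linear speedup. In a real deployment, a harness would launch the workload as jobs on the cluster and collect these numbers from job logs or metrics rather than timing a local function.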

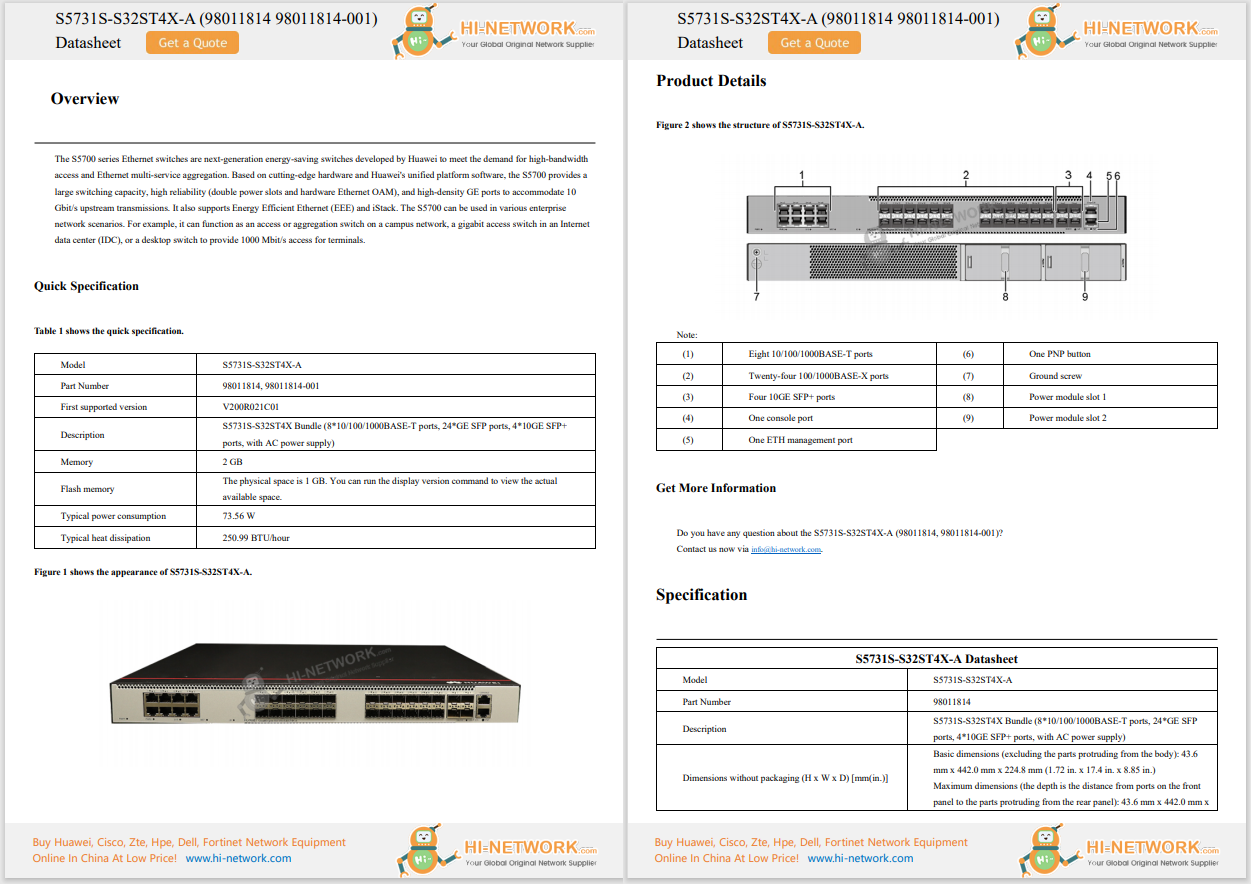

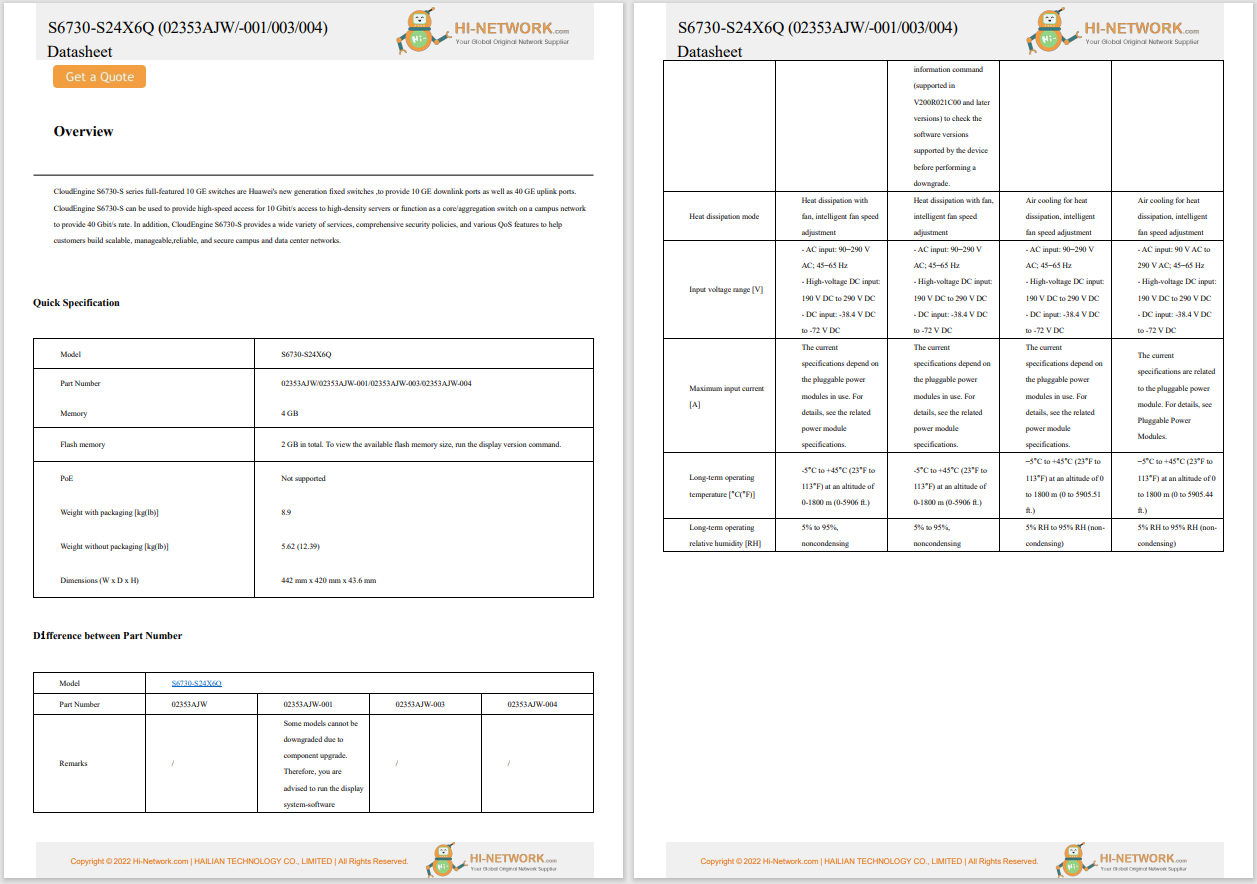

The picture below shows the overall architecture of Kubebench. The parts in yellow come prepackaged with Kubebench and include:

For more details about Kubebench, interested readers are referred to our KubeCon + CloudNativeCon talk and slides.

In the current cacophony over machine learning (ML), one thing that is often forgotten, or at least not discussed often enough, is the benchmarking of ML workloads. Kubebench has been a Cisco-led open-source effort to develop a benchmarking platform for ML workloads on Kubernetes. We would like to express our deep gratitude to the numerous reviewers and contributors from the Kubeflow project.

Hot tags:

featured

#cloud

#CiscoDNA

Cisco DNA

#MachineLearning

#consistentAI

kubebench

KubeFlow

benchmarking
