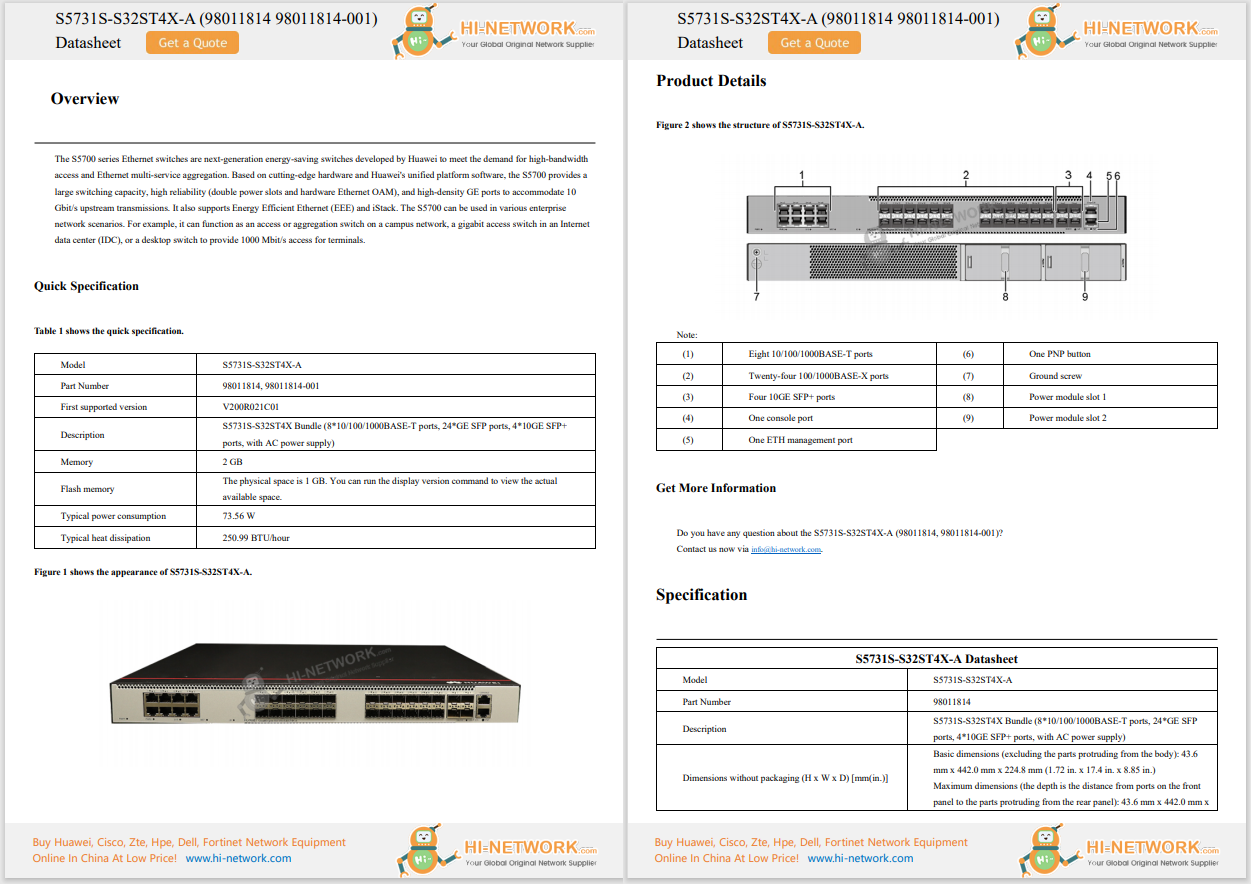

Image: Shutterstock / Kostenko Maxim

The concept of ethical technology has always seemed better suited to theoretical talks in conference rooms, but it is now set to become a tangible, and financial, priority for businesses developing digital tools.

According to tech analyst Gartner, worldwide privacy-driven spending on data protection and compliance technology will exceed $15 billion annually by 2024, as digital ethics become increasingly top-of-mind.

Privacy-aware organizations are set to invest in new technologies that better prevent algorithmic harms, says Gartner, ranging from homomorphic encryption, which enables algorithmic computation on encrypted data, to sovereign cloud and synthetic data.

This is driven by the fast development of artificial intelligence (AI) tools, which can have unintended consequences on a much larger scale than previous technologies, according to Gartner analyst Bart Willemsen. Whether they are writing text, driving a car, or assisting with criminal convictions, AI tools have a degree of autonomy, meaning that when things go wrong, they go seriously wrong.

"The autonomous nature of AI, the scale at which AI can have an impact, the speed at which it can have an impact, means that undesigned and undesired consequences have to be thought of earlier," Willemsen tells ZDNet.

Increasingly, the ethical discussion about the potential unforeseen consequences of an AI tool is going to take place before and during a technology's implementation, rather than after, says Gartner.

Although this strategy is slowly emerging, it is still far from reaching the majority of businesses. For now, instead of architecting ethics directly into the product to avoid future harms, companies still tend to wait until there is a crisis before making amends.

In 2018, for example, Amazon had to scrap an automated hiring tool after it was publicly disclosed that the technology had been found to discriminate against women. A year later, Goldman Sachs was faced with a probe over claims that the company's credit algorithm was gender-biased. And even governments have had to backtrack on the use of AI: the UK's Department for Education, for example, recently pulled back an algorithm assigning students' grades after it emerged that it favored children from wealthier schools.

"That is why the ethical question is shifting to: Should we even use this? If yes, to what extent? And then there is a question that was hardly asked until about a few years ago: Are we willing to pull the plug?" says Willemsen.

In some countries, laws are evolving to prompt companies to ask themselves those questions before, rather than after, developing AI tools. But even then, legal processes follow a familiar reactive pattern.

Willemsen points to the EU's latest draft regulations on AI, which include provisions such as prohibiting the use of AI to socially score individuals (an attempt to avoid a replication of the Chinese social credit system, says the analyst) or banning AI tools that can influence an individual's behavior or opinion.

"To me that just says: 'We've seen Cambridge Analytica, we would very much like to not have that happen again,'" says Willemsen. "It's a reaction. We all know where that comes from."

Gartner expects that more than 80% of companies worldwide will be facing at least one privacy-focused data protection regulation by the end of 2023, and laws will still play a huge role in reining in harmful algorithms. But to make sure that AI tools don't bring about new harms, companies will have to go one step further than laws designed to prevent what has already happened in the past.

Willemsen anticipates three different approaches to digital ethics. Some companies will simply invest to make sure they are complying with the rules, while slightly more proactive businesses will start thinking about risk mitigation.

"Say you're driving on an open road and you see a sign saying you shouldn't exceed 55 miles per hour," says Willemsen. "Compliance ethics means putting the cruise control on 54 and shutting your eyes. Mitigating risk means continuously looking through the windshield because if 3,000 people in front of you come to a full stop in a traffic jam, you're hitting the brakes."

In both cases, however, the approach is reactive. Implementing true digital ethics will require yet another strategy, says Willemsen: thinking beyond compliance, trying to infuse ethical principles from the very start of the development process for an AI technology, and generating value through those principles. Gartner is optimistic that this is the approach that is gradually becoming mainstream.

Of course, whether companies implementing digital ethics are driven by a true desire to make tech for good, or by the need to avoid public and legal backlash, remains to be seen. With the unintended consequences of the technology being more serious in nature, there is also much stronger public outcry when algorithms cause harm, and causing such outcry is in no business's interest.

"You're driven either still by your profits and losses, or by a humanistic perspective," says Willemsen. "It depends on the organization. But as far as I see, in most cases the decisive factor remains growth."

In both cases, the outcome remains the same: companies are moving away from reactive compliance towards proactive privacy by design. It is an optimistic forecast, and one that could come with huge societal benefits.

Hot tags:

Artificial Intelligence

Innovation
